75+ Geospatial Python and Spatial Data Science Resources and Guides

The growth of Python for geospatial has been nothing short of explosive over the past few years. More and more you find that geospatial processes are being developed and run on Python, and new users of geospatial are riding their way into geospatial because of it.

Job titles and terms like Spatial Data Science are growing at a rapid rate, and there is continued effort being put into developing geospatial tools for Python.

I love geospatial Python for the flexibility it offers as well as the wide and varied range of use cases it covers. It gives you the freedom to manipulate and work with data, analyze data, visualize, and build solutions and services all in the same language.

Of course, the same challenges exist as in learning any new language, especially for geospatial: knowing what areas to focus on and what to learn first.

Python for geospatial alone covers a wide range of use cases, everything from simple data reading and management to advanced spatial models to earth observation. And the broader Python ecosystem is even wider: machine learning, data science, data pipeline management, API access libraries, backend development frameworks, and lots more.

These are my favorite resources for getting started with and going deep into geospatial Python. As always, there is no substitute for trying things out for yourself. No matter how small, just get started!

Why should I learn Python?

First, before you start learning Python for geospatial, I believe it is important to define why you want to learn geospatial Python. Certainly, adding technical skills for your job or job prospects is a valid reason, but technical skills are one part of the equation.

Before you get started, I recommend defining what value you want to provide or what types of projects you want to build using these skills. Almost like a learning mission statement. This will help by both narrowing in on the tools to learn as well as helping you define the impact you can make by learning these new tools.

And keep in mind that Python might be one part of the equation. There are lots of other pieces to the puzzle to include as well: databases and SQL, desktop tools, APIs, and applications. Python can help you do just about any geospatial operation but I think it particularly shines in areas like spatial analysis, repeatable code and data processing, developing geospatial microservices, spatial data science, and creating analysis in notebooks.

Now that you have that set, let’s jump into the list!

1. Geospatial Python – the MapScaping Podcast

The MapScaping Podcast is one of my favorite forums for the geospatial community as there are so many ideas from geospatial experts in the community that have been on the show.

This specific episode talks about why Python is such a great tool for geospatial, and for those of you who come from a GIS background, this will be a good intro to Python as the guest, Anita Graser, has developed lots of resources in Python for automating processes in QGIS.

2. Python and Geospatial Analysis – GIS Lounge

Another great primer on how Python has grown in geospatial and the use cases you can use it for, as well as explainers on why it is becoming so popular in geospatial and GIS.

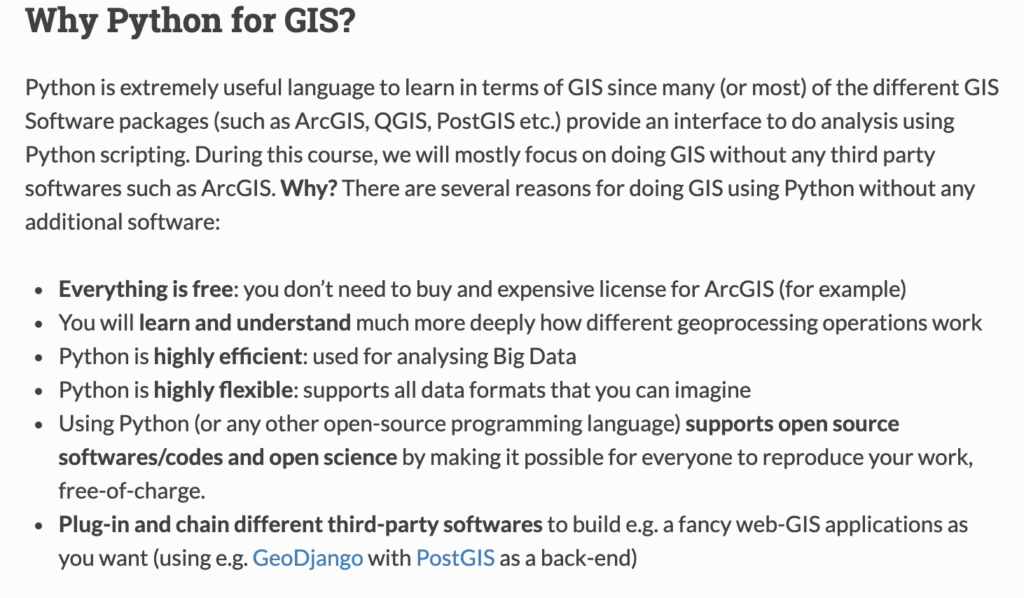

3. Why Python for GIS? – GeoPython (University of Helsinki)

I love this short and sweet primer on why you should choose to use Python for your GIS and geospatial processes. They not only have a great overview of some different libraries, but a short and sweet list of reasons why Python is such a great choice for geospatial.

4. 5 Reasons to Learn Python for Data Science

A short set of reasons why Python is great for generic data science, but still applicable to geospatial since there are so many areas of overlap that you can learn and expand into once you have learned Python.

5. Spatial Data, Spatial Analysis, Spatial Data Science – Luc Anselin

While this video is not directly focused on Python, it gives you (in my opinion) the best introduction to the challenges of spatial data science and the reasons why practicing it is important. Of course, a lot of the analysis is done in Python, and the playlist this video is a part of has a complete series that dives into some key concepts in spatial data science. Later in the post, there will be some tutorials that will show you how to actually put these principles into practice with Python.

How can I get started?

The next step is actually getting started with Python. There are many different ways to actually use and code in Python and you will need to find the one that works best for you! This could be:

- A code editor or IDE like Visual Studio or PyCharm

- A notebook tool such as Jupyter

- On the command line interface

- Local virtual environments

- Using a containerized environment in Docker

Depending on how complex or customized you want to go, I would recommend starting with Jupyter notebooks since they are quite simple to start working with, and there are even hosted versions of those tools so you can get started without any setup.

These articles will show you all the ways to work with Python, install packages, and ultimately help you make those decisions for yourself!

6. Installing Python – Real Python

If you Google “how to install Python”, the articles that show up will more than likely show you how to install Python locally on your machine. This guide walks you through how to do that. This will allow you to open and run a Python file from your computer via the command line.

I generally don’t use Python this way since I usually use a development environment to actually run and interact with the code on a virtual server or in a notebook environment, but having Python installed locally will help you orchestrate those tasks.

On a side note, Real Python has some of the better tutorials around all things Python.

7. pip – The complete guide – Dinesh Kumar

Python uses packages to extend and use different tools apart from the base language and pip is one of two options to install those packages (conda being the other). Pip is generally a bit easier to use, which is why I recommend it to others. There are lots of things you can do with pip, and this is a great guide for using it.

8. Jupyter Notebook Intro – Real Python

Jupyter notebooks are fast becoming one of the most popular methods of interacting with Python. They are quick and easy to stand up, the files are easy to transfer and share, they can add different visualizations and tools from simple to complex, and they let you break code into smaller chunks.

This guide shows you the quickest and easiest way to stand up a notebook locally. There are more complex methods in virtual environments or in Docker containers, but this gets you going in a few steps.

9. Hosted Notebook Solutions

If you don’t want to run a notebook locally, there are lots of hosted and managed notebook services that you don’t have to maintain: you can just sign in and start using them! Many of them also include some pre-packaged libraries. Below are my favorite providers for hosted notebooks:

10. My Jupyter geospatial Docker container

The main way that I use Jupyter notebooks is through Docker. Docker creates a clean environment every time and I can also construct the Dockerfile to include other libraries in different languages like libpostal for address parsing. You can add other libraries and, if you share the files, anyone with Docker can run from the exact same environment.

Basic Python

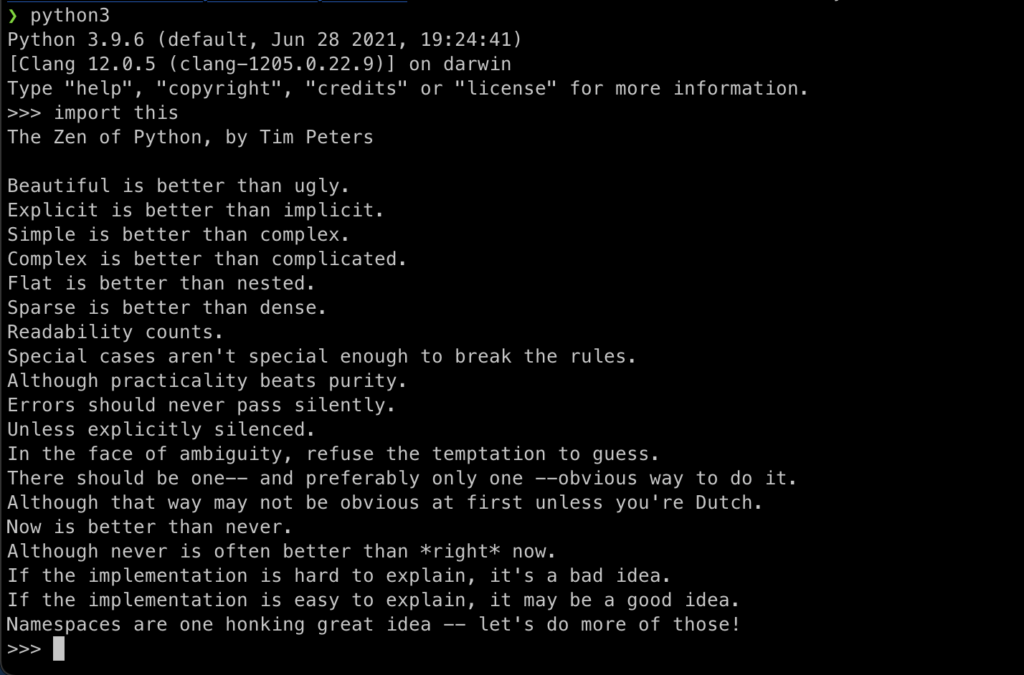

Python is a very approachable language, and its designers, including Guido van Rossum and Tim Peters, built that approachability into Python’s guiding principles (you can see them by running import this in Python).

As for most things in geospatial, you face the “jack of all trades, master of none” challenge. What I mean by this is that geospatial requires technical skills across lots of languages and practices: command-line tools, SQL, Python, frontend languages, and more.

Python can do many different things. There are so many use cases for it, which is why it can seem overwhelming, and we will cover some of them here. The good news is that the basic concepts of Python are super translatable. Below are some of the key concepts that are good to know no matter what you are doing with Python:

- Data types (strings, numbers, lists, dictionaries, tuples, sets, etc.)

- Control flow statements (if, while, for, try, with, etc.)

- Functions

- Modules

- Classes
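As a rough illustration of how these concepts fit together, here is a minimal sketch that uses only the standard library. The `Place` class, the `haversine_km` function, and the coordinates are all made up for this example (the coordinates are approximate), and the Earth radius is the usual mean-radius approximation.

```python
import math  # a module from the standard library
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation


@dataclass(frozen=True)
class Place:
    """A class bundling a name (string) with coordinates (floats)."""
    name: str
    lat: float
    lon: float


def haversine_km(a: Place, b: Place) -> float:
    """A function: great-circle distance between two places in kilometres."""
    lat1, lat2 = math.radians(a.lat), math.radians(b.lat)
    dlat = lat2 - lat1
    dlon = math.radians(b.lon - a.lon)
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


# Data types: a list of objects and a dictionary built with a comprehension.
places = [
    Place("Helsinki", 60.17, 24.94),
    Place("Stockholm", 59.33, 18.07),
    Place("Oslo", 59.91, 10.75),
]
origin = places[0]
distances = {p.name: haversine_km(origin, p) for p in places[1:]}

# Control flow: a for loop with a conditional expression.
for name, km in distances.items():
    label = "near" if km < 500 else "far"
    print(f"{origin.name} -> {name}: {km:.0f} km ({label})")
```

None of this is geospatial-library code, but the same building blocks show up in every library later in this post.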

11. Python First Steps – Real Python

Once again, Real Python FTW! This is an amazing resource that takes you step by step through some of the fundamental concepts in Python, with real exercises you can follow along with. This is definitely a great starting point for anyone looking to get started with Python.

12. Learn Python – learnpython.org

This is a more in-depth tutorial than the Real Python one above, and goes deeper into a few different areas. There is more content, but it also has in-line code examples that you can run right in the course.

13. Python Zero to Hero – TK

I have used this post a few times just to review key Python concepts in a very very fast format. TK does a great job laying out many of the basic principles here along with some code examples in a classic quick start format.

14. W3 Schools Python Intro

I like this guide since it is a very no-nonsense approach to learning Python. The guides are quick and easy, and if you keep in mind that the focus is to learn the fundamentals of the language, not every nuance and use case, this guide can help you do that in a very short period of time.

15. Python YouTube Starter Guides

If you learn better by watching videos and live examples, I recommend these two YouTube videos that walk through Python basics. Another approach would be to try out some courses on Udemy or Codecademy, but if you want some great free tutorials this is an excellent place to start.

Advanced Python

Once you have mastered the basics, there are some other advanced topics that are worth diving into especially if you want to do more advanced things like developing your own library or maybe building backend services.

16. 10 Must Know Topics of Python for Data Science

This tutorial provides some quick and digestible pieces of code that explain some deeper and trickier concepts such as classes, functions, list comprehension, lambdas, sets, tuples, and more.

17. Everything About Python — Beginner To Advanced

A really long one here but I think it takes a really nice path from the very beginning to the more advanced and allows you to benchmark your progress to see where you are and what else you need/want to learn.

18. Real Python Learning Paths, Advanced Tutorials, and Core Data Science Skills

Are you surprised to see more from Real Python? Well, they have a lot more beyond the basics that provide some great insights into going deeper on specific topics. The first is the Learning Paths section that lets you see all the different possible paths you can take with Python – one of which is the Core Data Science skills – which will be key with a lot of the geospatial libraries we will be looking at later. There are also some specific Advanced Python Tutorials to focus on very targeted use cases. These courses have some free and some paid content.

- Real Python – Learning Paths

- Real Python – Advanced Tutorials

- Real Python – Data Science Python Core Skills

How can I use Python?

If you haven’t guessed by now, the answer is many, many different ways. While the use cases I am focusing on here are the general Python skills that overlap with geospatial, there are so many other ways that Python is used, such as DevOps, web development, GUI programming, and more. Let’s dive into some of the areas that overlap with geospatial:

19. Data Processing and Scripting

Python is a great choice for reading and writing data files, manipulating data, reading data from databases, and scripting to change and manipulate data.

Pandas has by and large become the library of choice for working with a full range of data. The Pandas user guide and tutorials are the best place to get started and will always have up-to-date information, plus it will help you learn how to navigate their docs (one of the big skills that will help you become a more independent Python programmer). There are also two great videos that will help you get started – Pandas in 10 minutes and a longer 3-hour tutorial. Finally, there is a great cheat sheet to help you quickly reference concepts once you are up and running.
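To give a feel for what this looks like in practice, here is a small sketch of a read, filter, aggregate, and write cycle with Pandas. The CSV content (including the population figures) is made-up sample data held in a string so the example runs without any files; with real data you would pass a file path to `read_csv` and `to_csv` instead.

```python
import io

import pandas as pd

# Made-up sample data standing in for a CSV file on disk.
csv_text = """city,country,population,lat,lon
Helsinki,FI,656000,60.17,24.94
Espoo,FI,297000,60.21,24.66
Stockholm,SE,975000,59.33,18.07
Oslo,NO,700000,59.91,10.75
"""

df = pd.read_csv(io.StringIO(csv_text))

# Filter rows and add a derived column on a copy of the result.
large = df[df["population"] > 500_000].copy()
large["population_m"] = large["population"] / 1_000_000

# Aggregate by group.
by_country = df.groupby("country")["population"].sum()

# Write the filtered result back out as CSV text (to_csv also accepts a path).
csv_out = large.to_csv(index=False)
```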

20. Building APIs

Python is a great toolkit to build API services. You can use all the same libraries and code that you have been using, and then use other libraries to develop these services. I personally believe that geospatial microservices are a key part of a geospatial stack and this is a great way to build them.

Flask is the most popular library for building APIs and there is a great starter tutorial below, as well as the docs and getting started guide for a tool called FastAPI, which is similar but runs a bit faster, has built-in interactive API documentation for development, and handles async calls natively.

The final piece is a different package that contains four different components: Flask for API requests, Celery for job management for long-running jobs, Docker for containerization, and Redis for request caching. It’s a great starter kit for APIs that will have long-running jobs (generally anything more than a few seconds).

21. Connecting to databases

If you have read my previous posts you know that I am a major proponent of using spatial SQL and SQL. You can read more about the reasons in this post, but one thing to keep in mind is that many geospatial processes can be run in both Python and SQL. So how do you choose between them?

Here is my personal list (which I break all the time so these rules are not set in stone) of when to use Python vs. SQL:

I use Python when I need to

- Load and explore some data really quickly from a flat file

- Quickly visualize some data

- Translate between data formats (or I use GDAL on the command line)

- Perform exploratory spatial data analysis (always with PySAL)

- Analyze territory design problems

- Perform location allocation problems (although sometimes it is more efficient to create an origin destination matrix in SQL)

- Call APIs programmatically via Python to collect data

I use spatial SQL when I need to

- Store larger spatial datasets that I access frequently

- Perform joins across tables – spatial or otherwise

- Analyze spatial relationships

- Perform spatial feature engineering (covers almost all use cases)

- Aggregate data spatial or otherwise

- Create tile sets (although Python is still used in the API service)

- Route between lots of points

I flip flop between spatial SQL and Python when I need to

- Write custom functions to manipulate my data

- Perform geocoding (Python generally has more options)

- Re-project data (spatial SQL has the edge in this case)

- Manipulate geometries

- Make grid-based aggregations like H3 or Quadkey

- Perform spatial clustering

- Machine learning using tools like BigQuery ML
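To make the H3 or Quadkey item in the list above more concrete, here is a minimal pure-Python sketch of a Quadkey aggregation: each point is assigned to a Web Mercator tile, the tile is encoded as a quadkey string, and points are counted per key. The function names are my own, the sample points are made up, and in practice you would more likely reach for an existing library or the equivalent SQL function.

```python
import math
from collections import Counter


def latlon_to_tile(lat, lon, zoom):
    """Convert WGS84 coordinates to Web Mercator tile (x, y) at a zoom level."""
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so points on the eastern or southern edge stay in range.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tile_to_quadkey(x, y, zoom):
    """Interleave the bits of tile x and y into a base-4 quadkey string."""
    digits = []
    for level in range(zoom, 0, -1):
        mask = 1 << (level - 1)
        digit = 0
        if x & mask:
            digit += 1
        if y & mask:
            digit += 2
        digits.append(str(digit))
    return "".join(digits)


# Made-up (lat, lon) sample points; the first two are a few kilometres apart.
points = [(60.17, 24.94), (60.21, 24.66), (59.33, 18.07)]
zoom = 6

# Aggregate: count how many points fall in each quadkey cell.
counts = Counter(
    tile_to_quadkey(*latlon_to_tile(lat, lon, zoom), zoom) for lat, lon in points
)
```

Because a quadkey is just a string, the same aggregation is a simple GROUP BY once the key exists as a column, which is part of why this task moves so easily between Python and SQL.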

Knowing when to use either service can really speed up your workflow, and investing some time in building a spatial database can definitely help keep all your data in one place and help you get to the fun of analyzing data much faster. Here are some different methods of connecting to a database directly from Python.

SQLAlchemy is the de facto Python method to connect to a database. It has a lot of functions that let you grab data, but you will need to convert that data to a DataFrame or GeoDataFrame to use it with Pandas or Geopandas.

If you go this route and you are using PostgreSQL/PostGIS, then there is a great trick using the COPY command to read data into Pandas, which can significantly reduce the loading time. After establishing your connection in SQLAlchemy, you can also use built-in functions in Pandas and Geopandas to read the data (in addition to writing data back to PostGIS in Geopandas).

For other spatial databases you can also use GeoAlchemy or if you are using BigQuery there are Python libraries for working with data from BigQuery as well as creating data frames from that data, although you will have to turn the geometries from text to a valid geometry in Geopandas.
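As a rough sketch of the database-to-DataFrame pattern, the example below uses an in-memory SQLite database from the standard library as a stand-in for PostGIS or BigQuery, so it runs without any server. The table, its columns, and the rows are made up. Geometries are stored as WKT text, which mirrors the "geometry arrives as text" situation described above.

```python
import sqlite3

import pandas as pd

# An in-memory SQLite database stands in for a real spatial database here.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE stores (name TEXT, city TEXT, geom_wkt TEXT)")
conn.executemany(
    "INSERT INTO stores VALUES (?, ?, ?)",
    [
        ("Store A", "Helsinki", "POINT (24.94 60.17)"),
        ("Store B", "Helsinki", "POINT (24.95 60.16)"),
        ("Store C", "Espoo", "POINT (24.66 60.21)"),
    ],
)
conn.commit()

# Let the database do the filtering, then load only the result into Pandas.
query = "SELECT name, geom_wkt FROM stores WHERE city = ?"
df = pd.read_sql_query(query, conn, params=("Helsinki",))
conn.close()

# The geometry is still plain text; pull the coordinates out of the WKT.
coords = df["geom_wkt"].str.extract(r"POINT \(([-\d.]+) ([-\d.]+)\)").astype(float)
df["lon"], df["lat"] = coords[0], coords[1]
```

With Geopandas installed, `geopandas.GeoSeries.from_wkt` can turn a WKT column like this into real geometries, and `geopandas.read_postgis` reads straight from a PostGIS connection; the string parsing here only keeps the sketch dependency-light.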

22. Data Analysis

Python is amazing for data analysis and there is no better library than Pandas. Of course, NumPy and other libraries will come into play sometimes but nothing beats the flexibility and ease of use of Pandas.

Pandas – or Python Data Analysis Library – was started in 2008 and is purpose-built for data analysis. There are tons of places where you can learn how to use Pandas, but my number one recommendation is to use the Pandas docs. They have in-depth tutorials and you will also be teaching yourself how to be independent later on by navigating the docs and learning how to use them in great detail. If you are looking for a basic intro to the library I recommend checking out this article too.

23. Machine Learning

Machine learning is a very big topic and one that I get asked about all the time: how to learn and apply machine learning for geospatial. (Important side note to not intermingle machine learning and artificial intelligence – this image shows you how they are related)

It’s important to keep in mind that machine learning encompasses a massive field – from research in academia, to a wide range of models for different use cases, to a deep focus on math and statistics, to the engineering of models to ensure performance – there are many different parts of machine learning and there are plenty of people that spend their careers focused on one or two very specific areas of machine learning.

All this is to say that it is unlikely that you can learn everything about all aspects of machine learning, but you can learn how to functionally practice machine learning with the many amazing tools and libraries that are available and the required and recommended steps in the process.

By focusing your goal, you can make it far more achievable and also ensure you are able to apply the special sauce you bring to the table – geospatial thinking.

Machine learning is important to geospatial because it provides different frameworks to predict, classify, and cluster data. Geospatial is important to machine learning because it can provide rich data to different models.

Below are some of my favorite resources for machine learning.

Python for Data Science and Machine Learning

Python for Data Science and Machine Learning is one of my favorite and most functional resources for learning not only machine learning but also Python and Pandas. It is practical, detailed where it needs to be, and has a complete set of notebooks with examples. It is a paid course but

A Complete Machine Learning Project Walk-Through in Python

This is my all-time favorite guide to learning machine learning. It is comprehensive, has example notebooks, goes into detail on all the necessary steps, and provides clear descriptions for each of the key steps in a data science project. Will is a great author and has a ton of other great content, but you can use this framework and even the code to start building your own project step by step.

Other Resources

As I said earlier, machine learning is a huge topic and this article, How It Feels to Learn Data Science in 2019, does a great job of explaining how complex it is and all the different paths you can possibly take. I also recommend Data Science Has Become Too Vague that talks about how “data science” has become a catch-all term and why more roles should be defined. Practical Machine Learning Tutorial with Python Introduction also has a ton of different tutorials that are short and sweet across a lot of different model types.

24. Calling APIs

Finally, many different APIs come with Python libraries to call them and then perform different tasks with the data. Some services have Python libraries available, such as Google Maps or Nominatim (using OSM data). If not, you can always call an API via the requests library and retrieve the data as JSON. This post provides a great walkthrough on how to do this. If you are loading data into a DataFrame or storing it, make sure to check the restrictions on the API, as there may be a need to refresh the data or terms that don’t allow for data storage.
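Here is a minimal sketch of the "call an API and get JSON back" pattern against the public Nominatim search endpoint. To keep it dependency-free it uses `urllib` from the standard library rather than the requests library mentioned above; the flow is the same. The helper names are my own, and the sample response is hand-written to resemble Nominatim's output, not real data. The network call is defined but not executed, so the example runs offline.

```python
import json
import urllib.parse
import urllib.request

NOMINATIM_URL = "https://nominatim.openstreetmap.org/search"


def build_search_url(query, limit=1):
    """Build the request URL with properly encoded query parameters."""
    params = {"q": query, "format": "json", "limit": limit}
    return f"{NOMINATIM_URL}?{urllib.parse.urlencode(params)}"


def fetch_json(url, user_agent):
    """Perform the HTTP GET. Not called below so the example runs offline."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def parse_results(results):
    """Keep the fields we need and convert coordinate strings to floats."""
    return [
        {"name": r["display_name"], "lat": float(r["lat"]), "lon": float(r["lon"])}
        for r in results
    ]


# Hand-written sample shaped like a Nominatim response (not real output).
sample = json.loads('[{"display_name": "Example Place", "lat": "60.17", "lon": "24.94"}]')

url = build_search_url("Helsinki Cathedral")
rows = parse_results(sample)
```

To run it for real you would call `fetch_json(url, user_agent="your-app-name")` and pass the result to `parse_results`. Check the service's usage policy first, since public endpoints like this one typically expect an identifying user agent and limit request rates.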

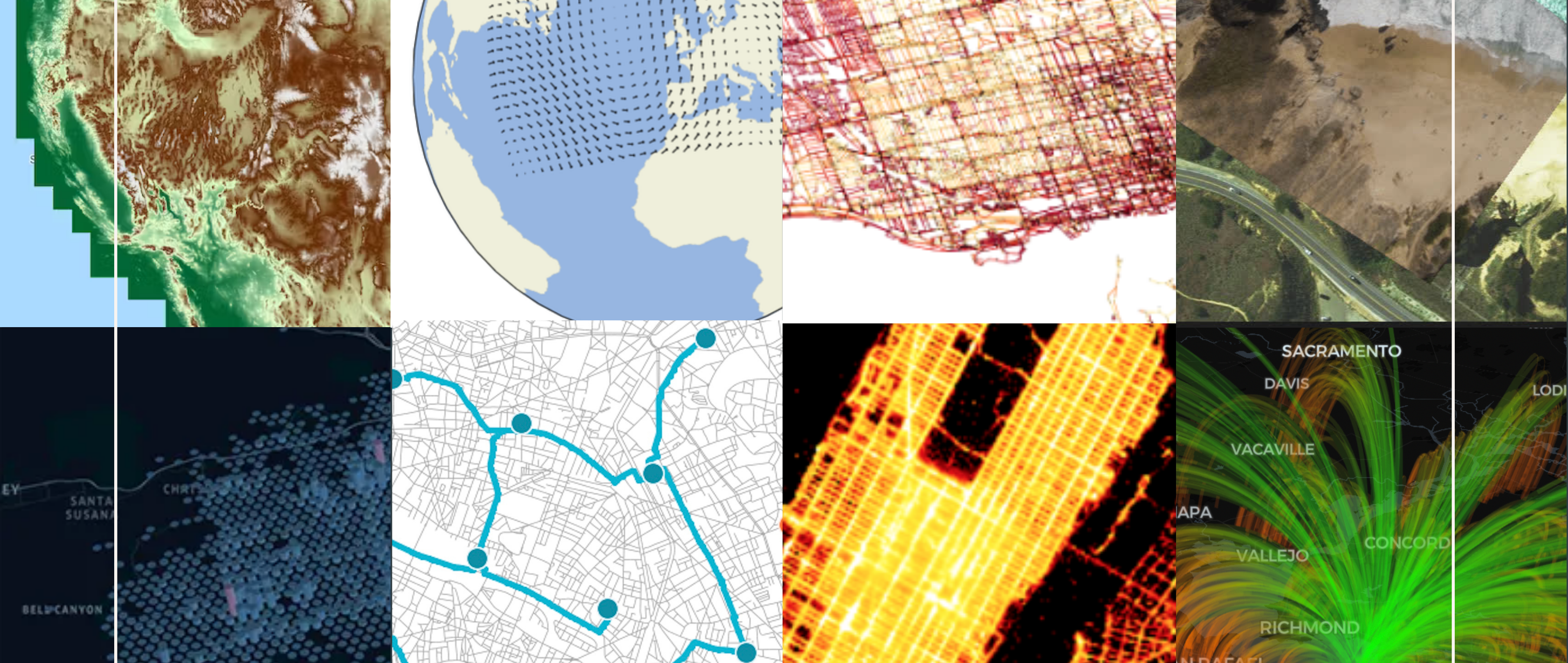

Geospatial Python Libraries

The geospatial ecosystem for Python is very large and diverse. There are many different libraries, each with its own purpose and function. Knowing which tool you need for which job is important and can help make your work more efficient and faster.

The geospatial Python ecosystem is growing rapidly, both in terms of the number of libraries and the people using these libraries. That said, there are some core components that are a part of most key workflows that you should be aware of to get started. Note that these are the libraries and tools that I use most so there are certainly way more that you should explore!

25. Geopandas

Geopandas, the geospatial cousin of Pandas, is your Swiss Army knife for geospatial Python. You can:

- Read data from multiple formats

- Export data to multiple formats

- Visualize data

- Evaluate spatial relationships

- Reproject data

- Manipulate geometries like simplifying and creating convex hulls

- Add spatial indices for faster querying and analysis

Geopandas is, in my opinion, the best place to start learning geospatial Python. It works alongside Pandas and you can use all the functionality from Pandas with Geopandas.

It covers a ton of functionality found in common GIS workflows, and many of its operations are built on top of other core geospatial Python libraries like fiona, GDAL, and shapely, so you can still use that functionality without necessarily knowing all the details of those libraries (although sometimes you will want to use those libraries for specific operations, which we will review later).

The best place to start learning Geopandas is, you guessed it, their docs! They have great tutorials and getting started guides alongside the docs and each guide includes a notebook that can be opened or downloaded.
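To show how several of the capabilities listed above look in code, here is a short sketch, assuming a reasonably recent Geopandas (one where `sjoin` accepts the `predicate` argument). The store locations and the rectangular region are made up, and EPSG:3067 is chosen only because it is a metric CRS that suits these Finnish sample points.

```python
import geopandas as gpd
import pandas as pd
from shapely.geometry import box

# Made-up store locations (lon/lat in WGS84).
stores = pd.DataFrame(
    {"name": ["A", "B", "C"], "lon": [24.94, 24.66, 18.07], "lat": [60.17, 60.21, 59.33]}
)
points = gpd.GeoDataFrame(
    stores,
    geometry=gpd.points_from_xy(stores["lon"], stores["lat"]),
    crs="EPSG:4326",
)

# A made-up rectangular region around the Helsinki area.
regions = gpd.GeoDataFrame(
    {"region": ["helsinki_area"]},
    geometry=[box(24.5, 60.0, 25.5, 60.5)],
    crs="EPSG:4326",
)

# Evaluate spatial relationships: keep points that fall within a region.
joined = gpd.sjoin(points, regions, predicate="within", how="inner")

# Reproject to a metric CRS, then manipulate geometries (1 km buffers).
local = joined.to_crs(epsg=3067)
buffers = local.buffer(1000)

# Export: serialize to GeoJSON text (to_file writes to disk instead).
geojson_text = joined.to_json()
```

Reading from a file is a one-liner as well (`gpd.read_file` with a path), which is omitted here only so the sketch needs no data on disk.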

26. Fiona

If your work involves changing geospatial data formats or manipulating vector data then getting to know Fiona is a good idea. Fiona is the library that opens files in Geopandas and is one less layer of abstraction if you want to process data faster.

Their docs have great guides on reading different data types and other tools. I’ve used this to convert filetypes and read data from cloud storage a lot and it works great if you need to do this in a data pipeline, but don’t want to go one level deeper into GDAL.

27. GDAL

Speaking of GDAL, the amazing and ubiquitous library has its own set of Python bindings. GDAL is embedded in so many different tools and libraries, and you can use it in your own workflows for lots of different tasks such as creating geometries, calculations, relationships, changing file types, projections, and more.

If you are doing heavy data engineering or working with large amounts of data, GDAL is a great choice because it is the lowest-level library to perform these operations, so you can save a lot of time by using GDAL when you scale to many operations. I usually use GDAL at the command line level but Python is a great way to use it as well.

28. PySAL

If you are serious about spatial data science and spatial modeling, then you need to know PySAL. PySAL, or the Python Spatial Analysis Library, is actually a collection of many different smaller libraries. It is written and maintained by some of the best geospatial minds practicing spatial data science using sound academic principles. The modules in PySAL include:

- esda: A library to perform exploratory spatial data analysis or spatial autocorrelation

- giddy: Extension to esda for spatio-temporal data

- inequality: Library to measure spatial inequality

- momepy: “momepy is a library for quantitative analysis of urban form” or urban morphology

- pointpats: Supports the spatial and statistical analysis of point data

- segregation: Tools for measuring segregation over space and time

- spaghetti: Spaghetti supports the spatial analysis of graphs, networks, topology, and inference

- access: “Whether you work with data in health, retail, employment or other domains, spatial accessibility measures help identify potential spatial mismatches between the supply and demand of services”

- mgwr: Library to perform multi-scale geographically-weighted regression models

- spglm: “…it supports the estimation of Gaussian, Poisson, and Logistic regression using only iteratively weighted least squares estimation (IWLS)”

- spint: Tools to analyze spatial interaction

- spopt: Library to work on optimization problems such as regionalization and location allocation

- spreg: Tools for spatial regression models

- spvcm: “…a general framework for estimating spatially-correlated variance components models”

- tobler: Library to perform spatial and areal interpolation

- legendgram: Simple library to provide legends with histograms

- mapclassify: Library for choropleth map classification methods

- splot: Visualization library specific to spatial analysis models

There is a lot to cover here and so many different toolkits for deep analysis, and each library comes with easy-to-follow examples and notebooks. There are courses that cover many of these libraries in detail, which we will cover later in the post.

29. GeoPy and libpostal

Geocoding is something that can be very easy or an incredible challenge. If you are working with pristine and well-addressed data, it should be a piece of cake for you. If not, as is the case for many of us, you may have messy data that is aggregated into one single string and includes extra information like floor numbers.

Two libraries that I use for geocoding are GeoPy and libpostal. GeoPy provides a library and common API to geocode against almost every common open-source and commercial geocoders.

Libpostal is a library, written in C, for parsing and normalizing street addresses using natural language parsing and open geospatial data. It can both parse address strings into address parts and normalize address strings.

For example, if you have an address like 123 main st – the normalized word for “st” might return street, state, or saint. It supports lots of languages and the Python bindings allow you to use this super fast library in Python.

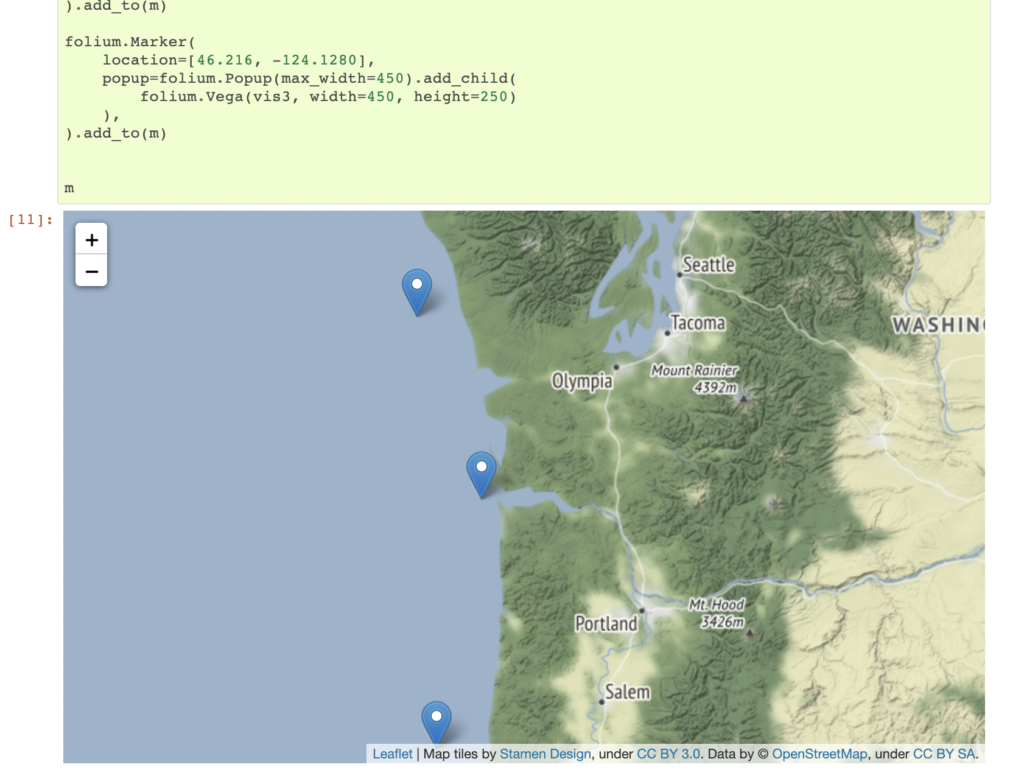

30. Folium, Kepler, and CARTOframes

For visualization, there are a plethora of options. For static maps you can use matplotlib or Geopandas, but interactive maps provide even more options. I will go into more tutorials later in the post, but my three favorite visualization libraries are:

- Folium: the most common interactive visualization library that uses Leaflet and other tools to provide many basic mapping functions

- CARTOframes: a fully featured visualization library to create complete dashboards and apps with filters and other features

- Kepler: the Python version of the popular KeplerGL app that allows you to do complex visualizations in your notebooks

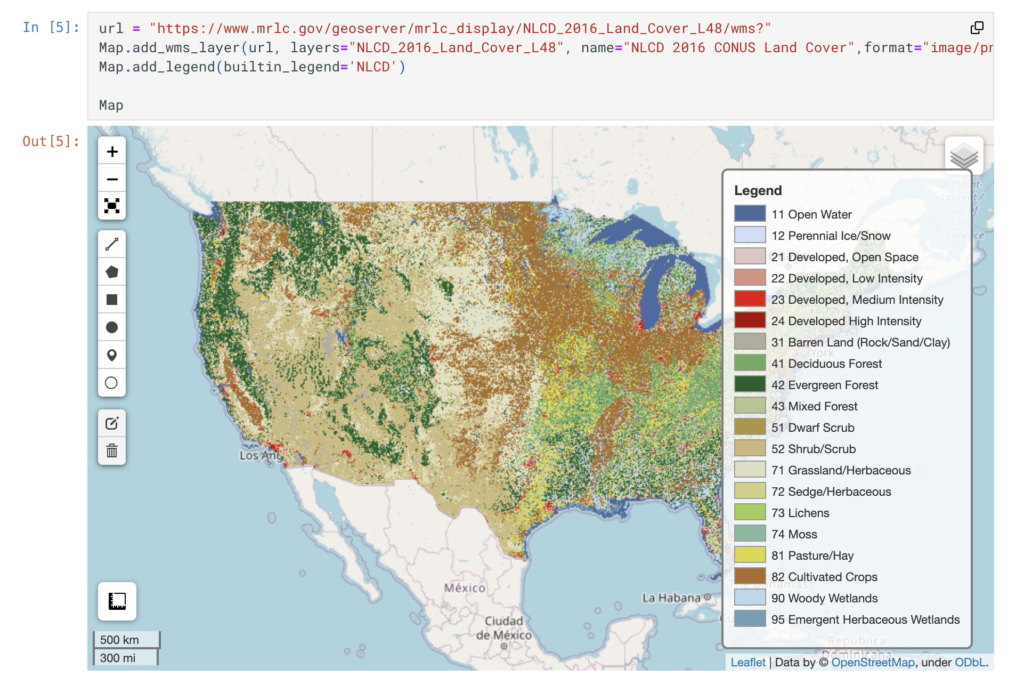

31. Leafmap

A newer package but one to keep an eye on is Leafmap. What I love about it is that it centralizes many other visualizations, geocoding, analysis, and other packages into one single tool. You can add local, cloud-hosted, PostGIS, OSM, and other data sources to the map, and it provides fully featured tools for raster data visualization and analysis.

The library is developed by Qiusheng Wu from the University of Tennessee, and he has done a great job not only building this library but also adding great docs and examples along with a YouTube channel with lots of video tutorials. Follow him on LinkedIn for more updates as well.

32. Rasterio

If you work with raster data, then Rasterio will be an important package for you. It allows you to read, write, create, mask, vectorize, and perform other common operations with raster data. It transforms the data into easy-to-use Python data structures. For modeling, you will need other tools but this allows you to work with and read/write raster data quite easily.

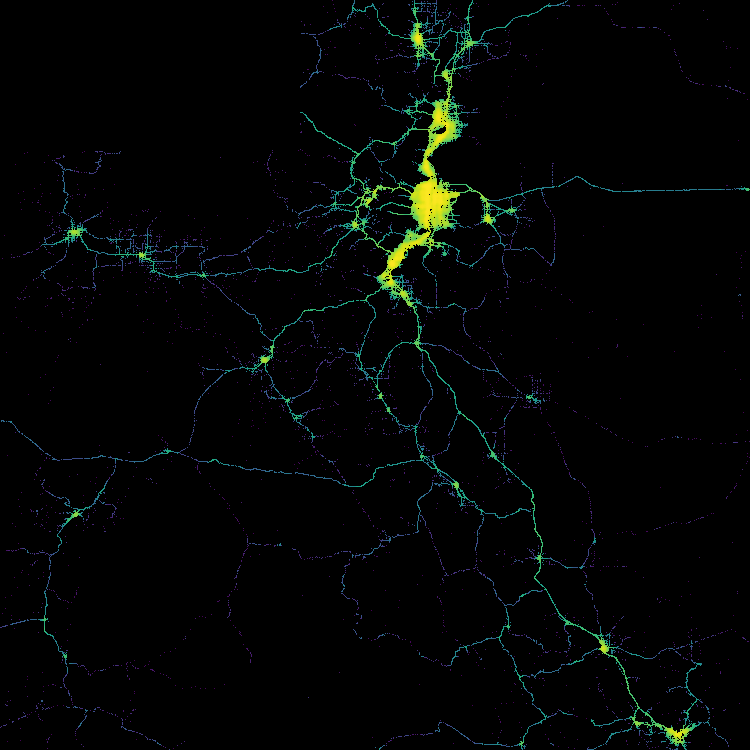

33. OSMnx

OSMnx is an incredible library developed by Geoff Boeing that not only allows you to query and retrieve data from OpenStreetMap, but also lets you create network graphs from that data using the NetworkX library so you can do tons of interesting analysis with road networks.

You can get any sort of line data that lives in OSM to use: roads, rivers, bike routes, utility lines, etc. From that data, you can create tools to build routes, create isolines or drive/walk time polygons, even add elevation rasters. There are tons of possibilities and the best place to learn is using the example notebooks and checking out Geoff’s blog.

34. ORTools

If you are performing any analysis that includes logistics problems like the vehicle routing problem or facility location, ORTools is one of the best libraries to use. It’s a very low-level library, meaning that it performs the very specific functions to calculate optimal routes so you will need to combine it with other libraries, but the results from it are quite impressive.

35. Geospatial Python on GitHub

This guide by Qiusheng Wu has a complete list of just about every and any geospatial Python library you might ever need or want. It is the go-to guide. Bookmark this and any time you need to solve a problem check here and more than likely there is something that will help you out.

Working with spatial data in Python

One of the first things you will need to do with geospatial Python is to work with geospatial data, and these are my favorite guides and tutorials to get started.

36. Geo-Python 2021 from the University of Helsinki

I really like this free tutorial from the University of Helsinki. It does a great job of reviewing some key Python principles and other libraries, then showing how you can apply those to geospatial data. Each lesson has detailed objectives and lessons to follow.

37. Python for Geospatial Analysis

A quick and concise tutorial that talks about reading, visualizing, and working with vector and raster data in Python. Really well-annotated examples in notebooks that are ready to use. If you are familiar with Python this will get you started quickly.

38. Automating GIS Processes

The title of this course can be a bit misleading because it is absolutely one of the most in-depth free resources around for geospatial Python. This course covers Geopandas, geocoding, spatial joins, nearest neighbor, visualization, reading data, and automating data processes. It has built-in exercises and very well-documented examples. If you need a place to start, this is one of the best!

39. Spatial Analysis and Geospatial Data Science with Python & the Complete Geospatial Data Science with Python Course

If you learn better with tutorials and videos, nothing is better than these two courses from Abdishakur Hassan, one of the best writers on the topic of spatial data science and geospatial Python. If you haven’t checked out his many tutorials on Medium, then definitely do so, but his courses aggregate a lot of those concepts and lessons in one place. Unlike a lot of Udemy courses, they focus on the important content and are in-depth yet concise.

40. Geopandas Intro Video

If you want to focus on Geopandas then this is a great video to get started. One single topic but a great way to kick things off.

41. Geocoding with GeoPy

The Automating GIS Processes course has a great section that is worth calling out if you need help with geocoding specifically. This shows you how to get started with GeoPy and you should be up and running in a matter of minutes!


42. geobeam

This is a great tool for Geospatial Data Engineers or anyone working with really massive volumes of data who also use BigQuery. geobeam extends Apache Beam which enables the streaming of large-scale data to another source, and when run on Google Cloud Dataflow, it can spread the process across many workers speeding up the ingestion process significantly.

Personally, I have used this to ingest, reproject, polygonize, and validate large-scale raster data (130M+ pixels and 2GB+ files), but Travis Webb, the creator of geobeam, has scaled to several TB and 25B rows of data within a few hours.

43. Performance Tips for Geopandas

A few months ago I shared some comparisons of spatial joins between Python and spatial SQL on LinkedIn, and many people shared their tips and tricks to speed up performance both in spatial SQL queries and in Python. These tips from Joris Van den Bossche and Pedro Camargo help to significantly speed up spatial joins in Geopandas using spatial indexing.
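To give a feel for why spatial indexing speeds things up, here is a minimal sketch of a grid index written in plain Python. This is an illustration of the idea only: Geopandas relies on tree-based indexes rather than a grid, and the function names, points, and cell size below are all assumptions made for the example.

```python
from collections import defaultdict

def build_grid_index(points, cell_size):
    """Bucket each point index by the grid cell that contains it."""
    index = defaultdict(list)
    for i, (x, y) in enumerate(points):
        index[(int(x // cell_size), int(y // cell_size))].append(i)
    return index

def query_bbox(index, points, bbox, cell_size):
    """Return indexes of points inside bbox, visiting only overlapping cells."""
    minx, miny, maxx, maxy = bbox
    hits = []
    for cx in range(int(minx // cell_size), int(maxx // cell_size) + 1):
        for cy in range(int(miny // cell_size), int(maxy // cell_size) + 1):
            for i in index.get((cx, cy), []):
                x, y = points[i]
                # Exact check, since a cell can extend beyond the bbox.
                if minx <= x <= maxx and miny <= y <= maxy:
                    hits.append(i)
    return sorted(hits)

# Hypothetical points in projected (planar) coordinates.
points = [(0.5, 0.5), (1.5, 1.5), (5.2, 5.1), (9.9, 9.9)]
grid = build_grid_index(points, cell_size=2)
matches = query_bbox(grid, points, bbox=(1, 1, 6, 6), cell_size=2)
print(matches)
```

The point is that the query only inspects candidates in nearby cells instead of comparing against every geometry, which is the same reason an indexed spatial join avoids a full pairwise comparison.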

Dashboards and visualization

One of the great things about geospatial Python is that you can create visualizations within a notebook all with Python. Notebooks can be shared and re-run, and parameters can be quickly changed and adjusted to make new maps. You can make static maps that can be shared as images, interactive maps, and publishable maps as well!

44. Visualizing Data with Geopandas

While you can customize and make your own maps with libraries such as Matplotlib or other libraries, Geopandas has some built-in functions for plotting maps including legends, choropleths, projections, and layers. These are all static image maps but can be helpful if you need to quickly check your data.

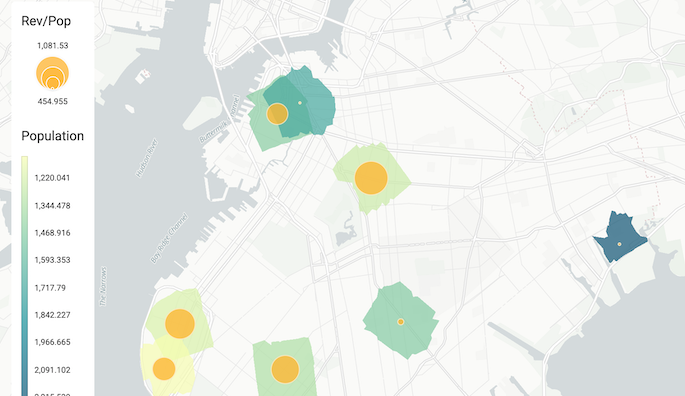

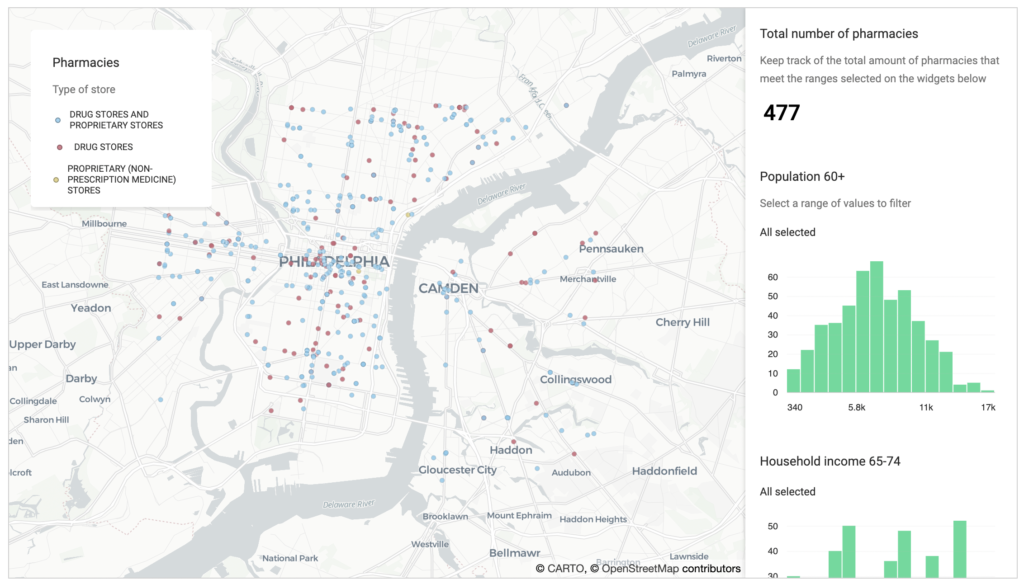

45. CARTOframes

CARTOframes adds additional capabilities for visualizing data within Jupyter notebooks. You can create interactive, static, series maps, and build full-scale dashboards with filters, widgets, animations, pop-ups, legends, and more. You can visualize local GeoDataFrames as well as data loaded from a CARTO account if you have one. It also comes preloaded with different color schemes for different data types. Below are some guides to get started for simple and advanced use cases, and some examples for analysis use cases as well:

46. Folium

Folium has long been the de facto library for creating interactive maps in Jupyter notebooks. It provides some basic functionality for mapping points, lines, and polygons using familiar tools such as Leaflet.js. You can also save your maps as HTML and publish the finished product.

47. Kepler GL

KeplerGL, the popular web interface for visualizing data, also has a package for visualizing data within a Jupyter notebook. This allows you to quickly add a GeoDataFrame to the map and then visualize it using the point and click GUI just as you would in Kepler to add 3D data, line connections, H3 cells, and more.

48. PrettyMaps

This is a really cool library that allows you to create some awesome visualizations from data in OpenStreetMap, and you can customize the styles to create very unique artistic visualizations. PrettyMaps is a basic library meant to create stylistic maps from OSM data. It is also the first package I have seen with an explicit warning against using it to create NFTs.

Machine learning and feature engineering with geospatial data

As I said earlier, machine learning is a massive topic, so for geospatial analysis, understanding how you can use geospatial data to make an impact in machine learning models is critical, along with which models you can use for which geospatial use case. In my opinion, there are a few areas where you should focus your time when thinking about geospatial data and machine learning:

- Feature engineering using geospatial data, spatial relationships, and more

- Extracting location data from other sources to use in machine learning

- Machine learning on or with geospatial data

By first narrowing your scope, you can decide which areas to focus on and when to employ each. From there you can go deep into any one of these areas to focus on what makes the most sense for you and your work.

The next section covers spatial modeling itself, but for now, we will look at the intersection of machine learning and geospatial data using Python.

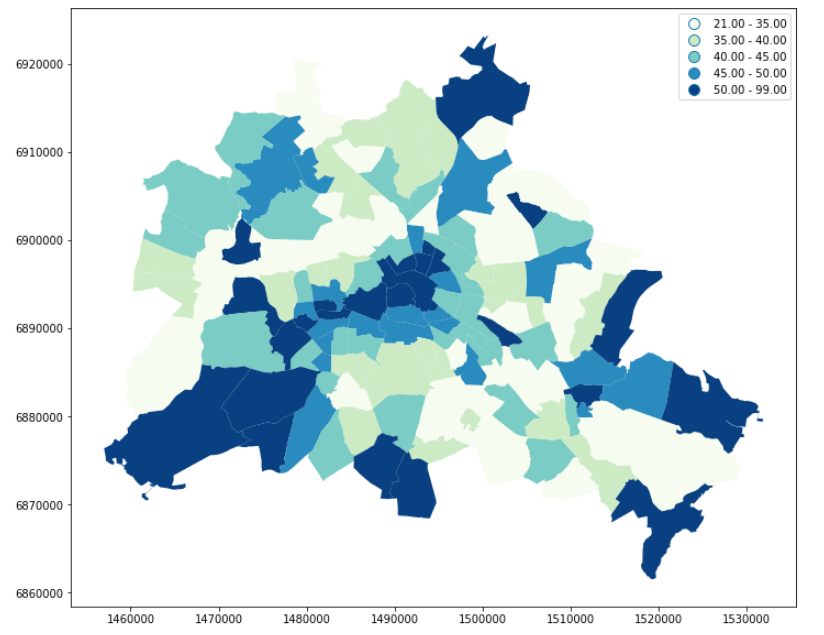

49. Spatial Feature Engineering from the Geographic Data Science with Python Book

I will cover the rest of this course in the next section, but this chapter of the course/book provides some very clear ideas and definitions on how to provide value with geospatial data by performing spatial feature engineering.

You might be asking what feature engineering and spatial feature engineering mean. If so, here is a definition of feature engineering:

Feature engineering is the process of using domain knowledge to extract features from raw data.

Wikipedia

These features are then fed into machine learning models during training to help make them more accurate. The key is that you use your domain knowledge to transform the data into meaningful features for your use case.

A simple example of this is turning a date into a day of the week or holiday if you are studying shopping patterns.
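As a minimal sketch of that date example, the snippet below derives a few features from a raw date using only the standard library. The holiday set and the feature names are assumptions for illustration; a real project would use a proper holiday calendar.

```python
from datetime import date

DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]

# Hypothetical holiday list, used only for illustration.
HOLIDAYS = {date(2022, 12, 25), date(2023, 1, 1)}

def date_features(d):
    """Turn a raw date into features a shopping model could use."""
    return {
        "day_of_week": DAY_NAMES[d.weekday()],  # weekday(): Monday is 0
        "is_weekend": d.weekday() >= 5,
        "is_holiday": d in HOLIDAYS,
    }

features = date_features(date(2022, 12, 25))
print(features)
```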

At its core, spatial feature engineering is the process of developing additional information from raw data using geographic knowledge.

Geographic Data Science with PySAL and the PyData Stack

To go into more detail, here is another quote from the course (it’s so well explained that I do recommend reading the entire chapter):

Geography is one of the most high-quality, ubiquitous ways to introduce domain knowledge into a problem: everything has a position in both space and time. And, while things that happen near to one another in time do not necessarily have a fundamental relationship, things that are near one another are often related. Thus, space is the ultimate linkage key, allowing us to connect different datasets together in order to improve our models and our predictions. This means that, even for aspatial, “non-geographic” data, you can use spatial feature engineering to create useful, highly-relevant features for your analysis.

Geographic Data Science with PySAL and the PyData Stack

Geospatial is one of the most important yet in my opinion underutilized features in machine learning today. Because of this, it is a great way to make an impact in your organization no matter if you are a data scientist learning geospatial or vice versa.

And this section of this course does an incredible job exploring different methods for spatial feature engineering and providing concrete Python examples alongside it.
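To make the idea concrete, here is a small sketch of two common spatial features: the distance to the nearest point of interest and the count of points of interest within a radius. It is not taken from the book; the coordinates, the store names, and the 2 km radius are assumptions for illustration, and distances use the haversine formula on a spherical Earth.

```python
import math

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers on a sphere of radius 6371 km."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(a))

def spatial_features(lat, lon, pois, radius_km=2.0):
    """Derive simple proximity features for one location."""
    dists = [haversine_km(lat, lon, p_lat, p_lon) for p_lat, p_lon in pois]
    return {
        "nearest_poi_km": min(dists),
        "pois_within_radius": sum(d <= radius_km for d in dists),
    }

# Hypothetical points of interest and store locations (lat, lon).
pois = [(40.7580, -73.9855), (40.7484, -73.9857), (40.6892, -74.0445)]
stores = {"store_a": (40.7549, -73.9840), "store_b": (40.6900, -74.0400)}

result = {name: spatial_features(lat, lon, pois)
          for name, (lat, lon) in stores.items()}
print(result)
```

Each store now carries two extra columns that a model can use, even though the original record held nothing but a coordinate pair.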

50. Working with Geospatial Data in Machine Learning

I like this post because it provides some simple methods for creating features from spatial data, and it provides a perspective of someone from outside of geospatial on using geospatial data for feature engineering.

51. Introducing Machine Learning for Spatial Data Analysis

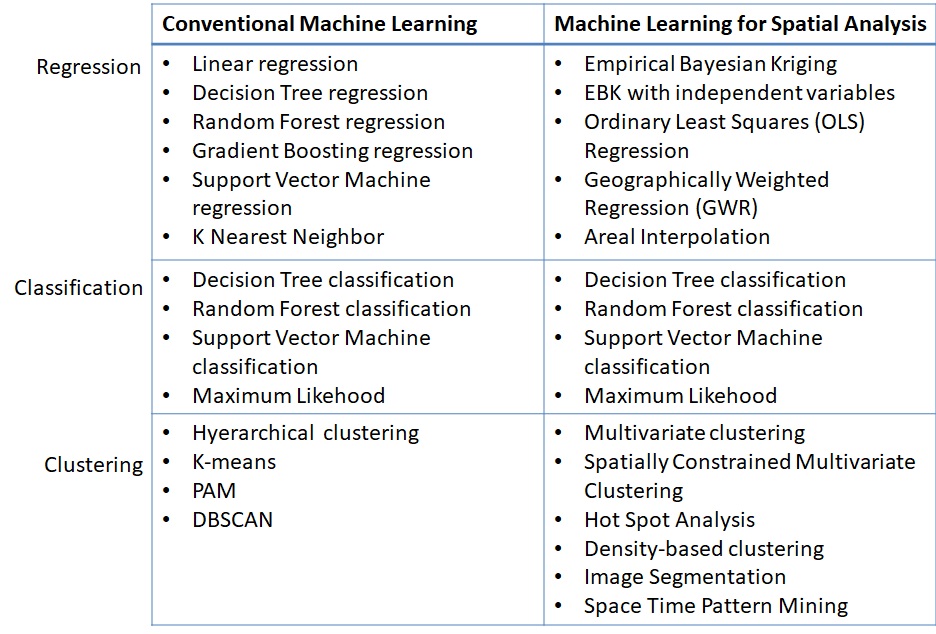

This article does an excellent job of explaining machine learning from both the perspective of a data scientist new to geospatial as well as someone in geospatial new to machine learning. This comparison chart looking at model types in machine learning compared to machine learning with spatial data is really valuable.

52. How to Extract Locations from Text with Natural Language Processing

Many times, geospatial data is hidden inside of text or other data, and natural language processing is a great way to find it. Extracting locations is a common application of machine learning, and you can use it to collect location information from many other sources.

Spatial modeling

53. Geographic Data Science with PySAL and the PyData Stack

If you are serious about true spatial modeling, not just machine learning with spatial data, then you need to spend some time familiarizing yourself with PySAL and the concepts and analysis it helps make available. Hands down it is the best library for spatial modeling, and this book/guide is one of the best ways to familiarize yourself with it.

This book/guide/course was developed by Sergio J. Rey, Dani Arribas-Bel, and Levi J. Wolf – some of the core developers of PySAL. They have the unique knowledge from their years of academic work, development of a very in-depth Python library, and practical experience to provide great tutorials into some complex topics with detailed examples.

This is the place to start to get into spatial modeling and spatial data science. It can be tricky as these can be complex concepts, but with the guides and detail, and taking it bit by bit, you can do some really amazing work.

54. Geographic Data Science

This course has been taught as well as published online for many years by Dani Arribas-Bel and has complete guides, code, and videos across a wide range of topics.

Everything from working with geospatial data to visualization, spatial weights, exploratory spatial data analysis, clustering, and more is covered here. This is a great starting point that also has the in-depth detail for any skill level.

55. PySAL Example Notebooks

PySAL has excellent documentation, but it can be tricky to sort through all the packages to see which you need for each use case. That said, each library in the PySAL family has fantastic example notebooks for you to check out and use. Below are some of my favorites:

- Exploratory Analysis of Spatial Data: Spatial Autocorrelation

- Regionalization, facility location, and transportation-oriented modeling

- Access package overview

- segregation Package Notebooks

Earth Observation, Remote Sensing and Imagery

Earth observation is a growing field and Python and new machine learning tools are making it even better. From image recognition of satellite imagery to modeling different phenomena with imagery, there are lots of possibilities in this space with Python.

56. Geospatial Machine Learning on GitHub

This GitHub repo/list has some great links to different machine learning and deep learning projects, datasets, papers, and more using remote sensing and satellite imagery. You will have to go through the different projects to find what works for you but this is a great place to learn the art of the possible and find some data to start working with.

57. GeoAI on Medium

This publication on Medium is one of my favorites for looking at advanced use cases for using Lidar, imagery, machine learning, deep learning, elevation data, and more. Some articles have associated code and examples, but overall these articles can give you an excellent idea of what you can do with imagery and Python.

58. leafmap and geemap

I mentioned leafmap earlier as it has a ton of tools beyond imagery analysis for geospatial, but these two libraries both developed by Qiusheng Wu have amazing built-in tools for imagery analysis. geemap is meant for those who work with Google Earth Engine and leafmap allows you to work with any imagery data. There are great tools for visualizing this data as well as performing analysis with the Whitebox UI within Jupyter for different raster operations.

The best place to get started is with the tutorials in the documentation as well as Qiusheng Wu’s YouTube page.

59. Geospatial Deep Learning

Abdishakur Hassan also has some great step by step tutorials for using earth observation and imagery data that I really enjoy:

Deep learning for Geospatial data applications — Multi-label Classification – using FastAI and PyTorch to label detailed imagery

Deep learning for Geospatial data applications — Semantic Segmentation – using the same stack as above to segment images (i.e. forest and field)

Multi-label Land Cover Classification with Deep Learning – classifying images with multiple labels using neural networks

Use Cases

Geospatial Python is just code without application, and in this section, we will dive into some different use cases for geospatial Python. Some examples have code and some do not, but hopefully, this will give you some ideas to get started!

60. Spatial Interpolation/Downscaling

Many times, spatial data will be reported at a coarser resolution, such as zip codes, which is not as useful for spatial analysis as smaller, more granular geometries. There are complex methods to downscale this data with multiple data inputs, such as those described in this blog post from CARTO, but there is also a great package from PySAL called tobler that can help with this problem and can use other input data to help solve it.
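The simplest version of this idea is area-weighted interpolation, where a value is split across target geometries in proportion to the overlapping area. Below is a rough sketch using axis-aligned rectangles in plain Python. It assumes the value (here a made-up population count) is spread evenly inside each source area, and the rectangles and numbers are hypothetical; it is not the tobler API.

```python
def rect_area(r):
    """Area of a rectangle given as (minx, miny, maxx, maxy)."""
    return (r[2] - r[0]) * (r[3] - r[1])

def overlap_area(a, b):
    """Area shared by two axis-aligned rectangles (0 if they do not overlap)."""
    width = min(a[2], b[2]) - max(a[0], b[0])
    height = min(a[3], b[3]) - max(a[1], b[1])
    return max(width, 0) * max(height, 0)

def area_weighted(sources, targets):
    """Allocate each source value to targets by the share of area overlapped."""
    estimates = []
    for target in targets:
        total = 0.0
        for rect, value in sources:
            total += value * overlap_area(rect, target) / rect_area(rect)
        estimates.append(total)
    return estimates

# Two hypothetical zip-code-like areas with population counts.
sources = [((0, 0, 4, 2), 800), ((4, 0, 8, 2), 400)]
# Smaller target cells; the middle one straddles both source areas.
targets = [(0, 0, 2, 2), (3, 0, 5, 2), (6, 0, 8, 2)]

estimates = area_weighted(sources, targets)
print(estimates)
```

The even-spread assumption is exactly what additional input data, such as land use or building footprints, is meant to improve on.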

61. Exploratory Spatial Data Analysis

Exploratory spatial data analysis, often carried out with spatial autocorrelation measures such as Moran’s I, is a very easy way to explore whether there are spatial relationships in data and see how they are organized using sound statistical methods. The PySAL package esda provides some very simple methods to accomplish this, and the outputs are easy to explain to many audiences, even those not familiar with spatial analytics, which is why it is one of my go-to analyses.
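If you want to see what Moran’s I is actually measuring, here is a minimal sketch of the global statistic on a small grid, written in plain Python rather than with esda. It assumes binary rook contiguity weights (cells sharing an edge are neighbors) with no standardization, so the numbers may differ from a library that transforms the weights, and it skips the significance testing a real analysis needs.

```python
def rook_neighbors(n_rows, n_cols):
    """Map each grid cell index to the cells that share an edge with it."""
    neighbors = {}
    for r in range(n_rows):
        for c in range(n_cols):
            cell = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < n_rows and 0 <= cc < n_cols:
                    cell.append(rr * n_cols + cc)
            neighbors[r * n_cols + c] = cell
    return neighbors

def morans_i(values, neighbors):
    """Global Moran's I with binary weights: (n / S0) * sum(w*z_i*z_j) / sum(z^2)."""
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]
    s0 = sum(len(nbrs) for nbrs in neighbors.values())  # sum of all weights
    cross = sum(z[i] * z[j] for i, nbrs in neighbors.items() for j in nbrs)
    return (n / s0) * cross / sum(zi * zi for zi in z)

w = rook_neighbors(4, 4)

# High values grouped on the left half of the grid (row-major order).
clustered = [1, 1, 0, 0] * 4
# High and low values alternating like a checkerboard.
checkerboard = [(r + c) % 2 for r in range(4) for c in range(4)]

i_clustered = morans_i(clustered, w)
i_checkerboard = morans_i(checkerboard, w)
print(i_clustered, i_checkerboard)
```

Positive values indicate that similar values sit next to each other, and negative values indicate that neighbors tend to differ, which is the story you can tell with the result.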

62. Spatial Clustering

Spatial clustering is similar to other clustering techniques but uses spatial relationships along with other data to identify clusters of activity. There are several resources on performing spatial clustering, including guides to DBSCAN and HDBSCAN. The Geographic Data Science with Python book also has a chapter on the topic, and finally, there is a great use case from CARTO that applies clustering to very dense data, looking at clusters of activity in New York City parks.
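For a sense of how density-based clustering works on point data, here is a compact sketch of the DBSCAN idea in plain Python. It is a teaching version with a brute-force neighbor search, not the scikit-learn implementation, and the points, `eps`, and `min_samples` values are assumptions; coordinates are treated as planar (projected) units.

```python
import math

def dbscan(points, eps, min_samples):
    """Label each point with a cluster id, or -1 for noise."""
    labels = [None] * len(points)  # None means not yet visited

    def region(i):
        # Brute-force neighbor search; the point itself is included.
        return [j for j, q in enumerate(points)
                if math.dist(points[i], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        nbrs = region(i)
        if len(nbrs) < min_samples:
            labels[i] = -1  # provisional noise; may become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in nbrs if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_nbrs = region(j)
            if len(j_nbrs) >= min_samples:
                queue.extend(j_nbrs)  # j is a core point, keep expanding
    return labels

# Two hypothetical groups of points plus one isolated point.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10), (50, 50)]
labels = dbscan(points, eps=1.5, min_samples=3)
print(labels)
```

Unlike k-means, you never specify the number of clusters, and isolated points are left out as noise, which is why this family of methods suits messy location data.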


63. Building a Spatial Model to Classify Global Urbanity Levels

Classification is another key problem in data science, and a classic problem in geospatial work is classifying areas. In this use case, data such as population grids, road networks, and nighttime lights are used to classify the entire world into different urbanity levels.

64. How to Enrich POS Data to Analyze & Predict CPG Sales

This use case dives into how you can use spatial data to predict potential sales of different products across space using a very popular Kaggle dataset, the Iowa liquor sales dataset. This data has data on specific product SKUs in Iowa, and using spatial data we can predict what sales might be like in other areas where stores are not present, or model sales in an entirely new state using spatial data.

65. Modeling the Risk of Traffic Accidents in New York City

This article talks about how you can use a common geospatial dataset such as traffic accidents (in this case in New York City) to create a model to categorize risk to certain areas using machine learning models.

66. Geospatial Natural Language Processing

I like this use case as it covers taking a text-based dataset, crime reports in Madison, WI, and turning it into usable geospatial data. But it also shows how you can use the same technology to build very cool solutions, such as a call center that can work to pinpoint locations using real-time call transcripts in a customer support setting.

67. Creating Territories

Territory creation is a very common use case and comes in all sorts of forms and flavors. From contiguous territories to clusters of activity to grouping overlapping regions to a central hub, these are all common use cases for creating territories. PySAL has some great territory solvers in its spopt package, with notebooks covering several different regionalization (or contiguous territory) problems, as does the Geographic Data Science with Python book. Lastly, there is a great use case from CARTO using genetic algorithms to create and assign facilities to different sales territories or individual sales reps, using constraints for multiple problems.


68. Network Analysis with OSMnx

I really love OSMnx, and there are some awesome use cases here for working with data from OpenStreetMap and solving really interesting problems with network data, from creating networks with elevation data to travel time isochrones to network-based spatial clustering.

- Draw an isochrone map with OSMnx

- Download any OSM Geospatial Entities with OSMnx

- Node elevations and edge grades

- Custom filters and other infrastructure types

- Network based spatial clustering

69. Location Allocation with PySAL

Personally, I think location-allocation problems are some of the most interesting problems in geospatial because they require both complex data and analysis but solve very practical and relatable problems. For example, location-allocation can be used to answer the question of “where should I place a new fire station to cover the most residents without overlapping coverage from other stations”. Location allocation can be used in the public sector to locate new services, by logistics companies locating new warehouses, by delivery companies adding new ghost kitchens or ghost grocery stores, and by communities wanting to build new Little Libraries.

I had been watching the development of the spopt package for some time and was very excited to see the additions by Germano Barcelos that added functionality for many of the core location-allocation problems with supporting notebooks.

- Location Set Covering Problem (LSCP) – Minimize the number of facilities needed and locate them so that every demand area is covered within a predefined maximal service distance or time

- Maximal Coverage Location Problem – Maximize the amount of demand covered within a maximal service distance or time standard by locating a fixed number of facilities

- P-Center Problem – To “locate a police station or a hospital such that the maximum distance of the station to a set of communities connected by a highway system is minimized”

- P-Median Problem – Locate a fixed number of facilities such that the resulting sum of travel distances is minimized
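To show what one of these problems looks like in code, here is a tiny sketch of the P-Median Problem solved by brute force: it tries every combination of candidate sites and keeps the one with the smallest total distance. This is only workable for toy inputs and is not how a solver-based package approaches it; the demand points, candidate sites, and straight-line distances are all assumptions for illustration.

```python
import math
from itertools import combinations

def p_median(demand, candidates, p):
    """Pick p candidate sites minimizing total distance to the nearest site."""
    best_sites, best_cost = None, math.inf
    for sites in combinations(candidates, p):
        # Each demand point is served by its closest chosen site.
        cost = sum(min(math.dist(d, s) for s in sites) for d in demand)
        if cost < best_cost:
            best_sites, best_cost = sites, cost
    return best_sites, best_cost

# Hypothetical demand points and candidate facility sites (planar units).
demand = [(0, 0), (1, 0), (2, 0), (10, 0), (11, 0), (12, 0)]
candidates = [(1, 0), (6, 0), (11, 0)]

sites, cost = p_median(demand, candidates, p=2)
print(sites, cost)
```

Swapping the `sum` for a `max` turns the same loop into a P-Center style objective, which is a nice way to see how closely related these problems are.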

70. Data Clustering in San Francisco Neighborhoods

A great step-by-step tutorial that not only shows how to grab geospatial data from an API but also use different methods to cluster data in different categories and neighborhoods based on similar POIs.

71. Classification of Moscow Metro stations using Foursquare data

A classification project similar to the one above, using Foursquare data to classify neighborhoods around Moscow metro stations.

72. Connecting and interpolating POIs to a road network

A detailed use case with examples and other links to understand a common geospatial problem, snapping points and other data to road networks.

73. A Beer Lover’s BFF? An Introduction to Geospatial Interpolation via Inverse Distance Weighting

Another interesting and specific use case to study distance weighting using breweries to find the center of any state’s brewery scene.
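Inverse distance weighting itself is simple enough to sketch in a few lines: the estimate at a location is a weighted average of known values, with weights of 1 / distance^power. The snippet below is a minimal plain-Python version, not the code from the article; the sample points and the default power of 2 are assumptions for illustration.

```python
import math

def idw(x, y, samples, power=2.0):
    """Estimate a value at (x, y) from (sx, sy, value) samples using IDW."""
    numerator = denominator = 0.0
    for sx, sy, value in samples:
        d = math.hypot(x - sx, y - sy)
        if d == 0:
            return value  # exactly on a sample point, so use its value
        weight = 1.0 / d ** power
        numerator += weight * value
        denominator += weight
    return numerator / denominator

# Two hypothetical samples in planar coordinates.
samples = [(0, 0, 10.0), (2, 0, 20.0)]

midpoint = idw(1, 0, samples)      # equal distance to both samples
near_first = idw(0.5, 0, samples)  # pulled toward the closer sample
print(midpoint, near_first)
```

A higher `power` makes nearby samples dominate the estimate, while a lower one produces a smoother surface.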

74. Geospatial Operations at Scale with Dask and Geopandas

If you are really in love with Python and Geopandas but want to scale to larger datasets, then this is a great post on using Geopandas with Dask, which partitions a dataframe into many smaller dataframes to speed up operations.

75. Improving Operations with Route Optimization

I think one complex thing about routing or optimization problems is conceptualizing them and understanding a real-world use case, and this is one of the few I have found that does that. This article talks about solving the Vehicle Routing Problem at scale testing different methods such as recursive DBSCAN and Google ORTools.