GeoParquet will be the most important tool in modern GIS in 2022

Yes, (another) file format is the most important new development for the modern GIS stack in 2022. There is a ton of hype around tools, processes, and methods for making geospatial analysis faster and more scalable. But what if the data itself (in this case, vector data) were formatted for better query performance, both locally on your machine and in cloud data warehouses and data lakes?

GeoParquet is elegantly simple: it has a few straightforward advantages, yet it solves several major hurdles for modern GIS.

My goal for this post is to explain why this effort is the most important one for modern GIS in 2022, and why I think it is a true game-changer for people working in many different kinds of geospatial workflows.

What is Parquet?

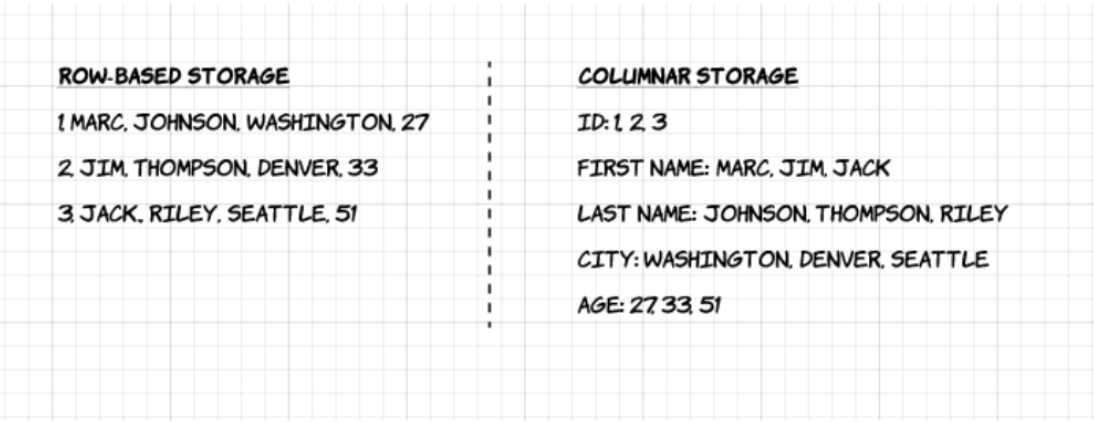

Parquet is a data format that supports columnar data storage, compared to row data storage in other formats like CSVs. The below image from Upsolver shows a great comparison of row data storage vs. columnar storage:

Parquet has a few different advantages, but the ability to store, query, and compress data column by column, with each column's data type handled separately, provides major performance gains. GeoParquet builds on that by adding support for point, line, and polygon geometries.

In fact, some of the tests in this article show read speedups of anywhere from 10x to 50x compared to reading a CSV.
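To make the row vs. columnar difference concrete, here is a minimal Python sketch using only the standard library. The tiny dataset and the function names (`mean_lat_from_csv`, `csv_to_columns`, `mean_lat_from_columns`) are hypothetical, and this is not how Parquet works internally. It only illustrates why a query over one column has less work to do when each column is stored on its own as a typed array.

```python
import array
import csv
import io

# A tiny, made-up dataset of point features stored row by row, as in a CSV.
CSV_TEXT = """id,name,lon,lat
1,cafe,-73.98,40.75
2,park,-73.97,40.77
3,museum,-73.96,40.78
"""


def mean_lat_from_csv(text):
    """Row layout: every line is split into all of its fields, even though only 'lat' is needed."""
    reader = csv.DictReader(io.StringIO(text))
    lats = [float(row["lat"]) for row in reader]
    return sum(lats) / len(lats)


def csv_to_columns(text):
    """Transpose the rows into one homogeneous, typed container per column."""
    reader = csv.DictReader(io.StringIO(text))
    cols = {"id": array.array("q"), "name": [], "lon": array.array("d"), "lat": array.array("d")}
    for row in reader:
        cols["id"].append(int(row["id"]))
        cols["name"].append(row["name"])
        cols["lon"].append(float(row["lon"]))
        cols["lat"].append(float(row["lat"]))
    return cols


def mean_lat_from_columns(cols):
    """Columnar layout: the query reads only the contiguous 'lat' array."""
    lat = cols["lat"]
    return sum(lat) / len(lat)


columns = csv_to_columns(CSV_TEXT)
print(mean_lat_from_csv(CSV_TEXT), mean_lat_from_columns(columns))
```

Both functions return the same answer. The difference is how much data each one has to parse to get there, which grows with the number of columns in a real table.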

What is GeoParquet?

GeoParquet is a new file format that adds support for geospatial vector data (i.e. points, lines, and polygons) to Parquet, using the same underlying technology. It covers only vector data, is being developed as a project under the Open Geospatial Consortium (OGC), and is supported by many companies in the geospatial space.
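Under the hood, the GeoParquet spec stores each geometry as Well-Known Binary (WKB) in an ordinary binary column and describes the geometry columns in a JSON-encoded `geo` entry in the file's key-value metadata. The sketch below uses only the Python standard library to encode points as WKB by hand and to build a metadata dictionary of that shape. It is not a Parquet writer. The helper names are hypothetical, and the `version` value and the selection of fields are assumptions, so check the current spec before relying on them.

```python
import json
import struct


def point_to_wkb(x, y):
    """Encode a 2D point as little-endian Well-Known Binary (WKB)."""
    # Byte-order flag (1 = little endian), geometry type (1 = Point), then X and Y as doubles.
    return struct.pack("<BIdd", 1, 1, x, y)


def wkb_to_point(buf):
    """Decode a little-endian WKB point like the ones produced by point_to_wkb."""
    byte_order, geom_type, x, y = struct.unpack("<BIdd", buf)
    if byte_order != 1 or geom_type != 1:
        raise ValueError("expected a little-endian WKB Point")
    return x, y


# Geometry column: one WKB blob per feature, stored like any other binary column.
geometry_column = [point_to_wkb(-73.98, 40.75), point_to_wkb(-73.97, 40.77)]

# File-level "geo" metadata describing the geometry column.
# ASSUMPTION: the version string and the fields chosen here follow the published
# spec as best understood; this is an illustration, not a validated document.
geo_metadata = {
    "version": "1.0.0",
    "primary_column": "geometry",
    "columns": {
        "geometry": {"encoding": "WKB", "geometry_types": ["Point"]},
    },
}
geo_metadata_json = json.dumps(geo_metadata)
print(len(geometry_column[0]), geo_metadata_json)
```

Because the geometry is just bytes in a regular column, any Parquet reader can load the file. Only the `geo` metadata tells a spatially aware tool how to interpret those bytes.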

Chris Holmes of Planet, who is also a board member at OGC, has shared some great resources on GeoParquet and why it matters. I'll share some of the highlights below, but I definitely recommend these resources, as well as this post from Javier de la Torre, who has also been involved in the effort.

Note that this effort is related to, but distinct from, geo-arrow-spec, which has similar goals. The differences between the two are summed up in this issue.

Really? Another file format? How can GeoParquet help me?

Yes, really. I know that GIS has more than enough file formats and that converting between them can be a headache-inducing process, but GeoParquet has the capabilities to solve several issues upstream, which makes it worth it.

My reasoning comes down to two main points:

Speed, local and in the cloud

Parquet is a very fast file format for loading and analyzing data, mainly because of its columnar storage. This post highlights several speed comparisons for Parquet, where they saw read speeds 10x to 50x faster using the combination of tools they detail, compared to Pandas and CSV files.

Of course, this depends on many factors, such as the density or sparsity of the data, the amount of data you want to use, and the columns you select. That said, I think this has some interesting implications for GeoParquet, both for analyzing spatial relationships and for visualizing data (Chris Holmes also discussed building in overviews for visualization in his post).

This enables very fast performance whether you are using GeoParquet locally or in the cloud, which is one of the core principles of modern GIS.
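Part of why the columns you select matter is column pruning: a columnar reader can skip the bytes of any column a query never touches. The hypothetical sketch below stores each float column as its own byte chunk and counts how many bytes a query actually reads. The layout and the helper names (`write_column_chunks`, `read_columns`) are made up for illustration. Real Parquet files add encodings, compression, and row groups on top of this basic idea.

```python
import struct


def write_column_chunks(columns):
    """Serialize each float column into its own contiguous little-endian byte chunk."""
    return {name: struct.pack(f"<{len(values)}d", *values) for name, values in columns.items()}


def read_columns(chunks, wanted):
    """Decode only the requested columns and count the bytes actually touched."""
    result, bytes_read = {}, 0
    for name in wanted:
        chunk = chunks[name]
        bytes_read += len(chunk)
        # Each double is 8 bytes, so the chunk length tells us how many values it holds.
        result[name] = list(struct.unpack(f"<{len(chunk) // 8}d", chunk))
    return result, bytes_read


N_ROWS = 1_000  # hypothetical table size

# Four made-up numeric attribute columns.
table = {
    "lon": [i * 0.001 for i in range(N_ROWS)],
    "lat": [i * 0.002 for i in range(N_ROWS)],
    "elevation": [float(i % 50) for i in range(N_ROWS)],
    "population": [float(i * 3) for i in range(N_ROWS)],
}

chunks = write_column_chunks(table)
total_bytes = sum(len(c) for c in chunks.values())
subset, bytes_read = read_columns(chunks, ["population"])
print(f"read {bytes_read} of {total_bytes} bytes")
```

Asking for one of four equally sized columns reads a quarter of the data. A row-based format like CSV would have to scan all of it.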

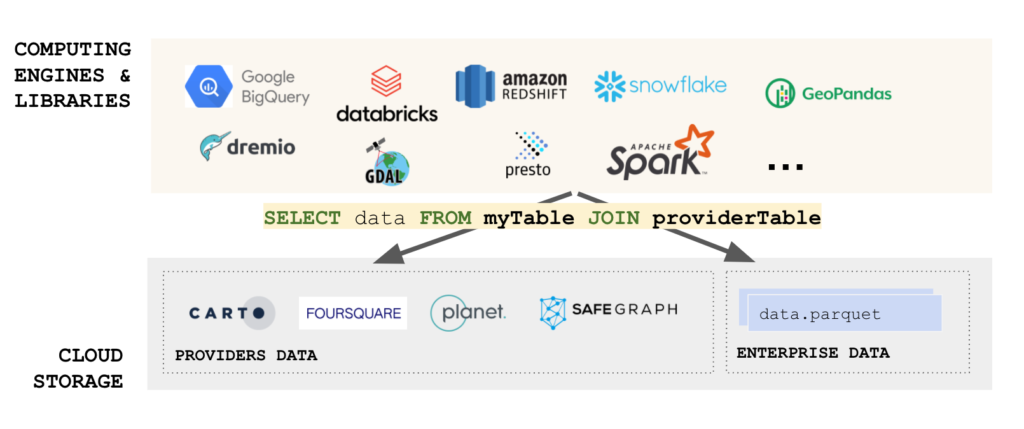

Interoperability across any tool, specifically data warehouses

A key challenge of GIS, and modern GIS, has been the sheer number of file formats for both raster and vector data. Working with these files is one challenge, but loading them into databases or data warehouses is another.

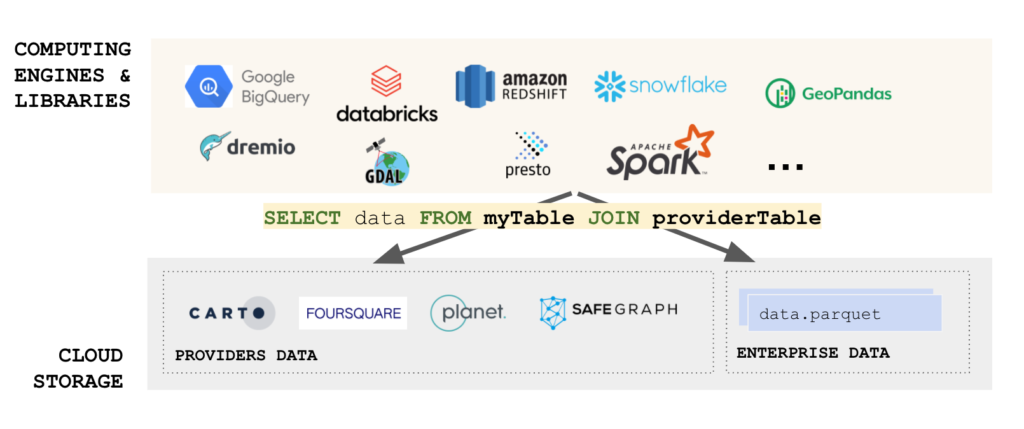

In many cases, GeoParquet removes this hurdle, since a core idea behind its development is to ensure that GeoParquet can be used by any of the spatially enabled data warehouses, such as BigQuery, Snowflake, Redshift, or Databricks. Even PostgreSQL is getting on board.

Even better, these data warehouses let you read remote files, and Parquet is one of the supported formats. This means you can drop your files into cloud storage like AWS S3 or Google Cloud Storage and immediately query those same files with spatial SQL in the corresponding cloud data warehouse. You can do the same locally with other tools too.
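One reason Parquet works well straight from object storage is that a reader does not have to download the whole file first. In the Parquet format, a file ends with the serialized file metadata, a 4-byte little-endian length of that metadata, and the magic bytes `PAR1`. A reader can therefore fetch the small footer with byte-range requests and then request only the column chunks it needs. The sketch below simulates this with an in-memory byte string. `read_range` is a hypothetical stand-in for a ranged GET against S3 or Google Cloud Storage, and the metadata bytes are a placeholder rather than real Thrift-encoded metadata.

```python
import struct

MAGIC = b"PAR1"


def read_range(blob, start, length):
    """Hypothetical stand-in for an HTTP byte-range GET against object storage."""
    return blob[start:start + length]


def fetch_footer(blob, size):
    """Return the raw file-metadata bytes using two small ranged reads from the end.

    `size` is the object size, which a remote reader would get from the storage
    service (e.g. from the object listing) rather than by downloading the file.
    """
    # The last 8 bytes hold a 4-byte little-endian metadata length, then the magic "PAR1".
    tail = read_range(blob, size - 8, 8)
    if tail[4:] != MAGIC:
        raise ValueError("not a Parquet file: missing trailing PAR1 magic")
    (meta_len,) = struct.unpack("<I", tail[:4])
    # The serialized file metadata sits immediately before those 8 bytes.
    return read_range(blob, size - 8 - meta_len, meta_len)


# A synthetic "file": leading magic, fake column data, placeholder metadata, then the footer.
fake_metadata = b"placeholder for Thrift-encoded FileMetaData"
fake_file = (
    MAGIC
    + b"...column chunk bytes..."
    + fake_metadata
    + struct.pack("<I", len(fake_metadata))
    + MAGIC
)

footer = fetch_footer(fake_file, len(fake_file))
print(footer)
```

In a real file, that footer records where each column chunk lives. This is what lets a query engine read only the parts of a remote file it actually needs.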

No ETL, just L. And in true modern GIS style, users locally and in the cloud get the same benefits.

So GeoParquet will ultimately help you:

- Analyze large-scale data

- Load data and use it immediately in a variety of tools

- Easily share and deliver data, or quickly use data from data providers

- Use the same data format no matter which spatial database or data warehouse you work in

Okay, I get it. How can I start using it?

GeoParquet isn’t widely available yet and is still under development. Check out the full repository here and contribute as you see fit, or open issues for the team to review! The full release is coming soon, and in the meantime the repo also lays out the project's guiding principles.

All the development is happening out in the open, so get involved and get ready for what I believe will be a truly innovative and transformational tool.